Test Suite: Difference between revisions

Paul Wouters (talk | contribs) No edit summary |

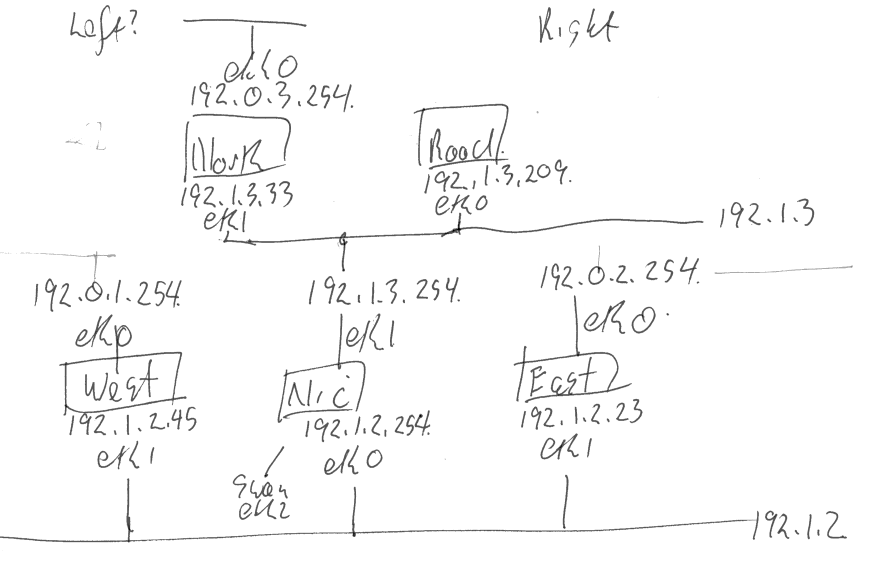

=== Hand Sketch of Current Network === | |||

[[File:networksketch.png]] | |||

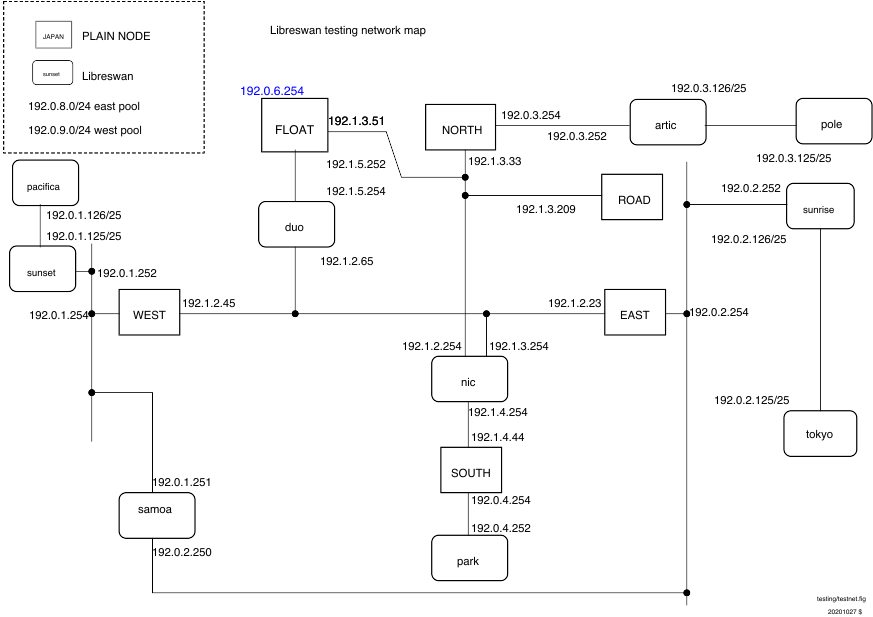

=== Original Network Diagram === | |||

[[File:testnet.png]] | |||

[[File: | |||

Latest revision as of 22:25, 7 May 2025

Nightly Test Results

Libreswan's test suite is run nightly. The results are published at https://testing.libreswan.org/, with the most recent result at https://testing.libreswan.org/current. The tests are categorized as:

- good: these tests are expected to pass (unfortunately, some still have timing problems and occasionally fail)

- wip: these tests require further work, for instance the result may not be deterministic, or the bug they demonstrate hasn't yet been fixed

- skiptest: these tests require manual intervention to run
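As a rough illustration of how these categories could be tallied, the following sketch counts tests per expected outcome. It assumes that each non-comment line of testing/pluto/TESTLIST has the layout "kind name status" with "#" starting a comment; that layout, the function name `count_by_status`, and the sample entries are assumptions made for illustration, not taken from this page.

```python
from collections import Counter


def count_by_status(testlist_text):
    """Count tests per expected status (good, wip, skiptest, ...).

    Assumes each entry is "kind name status"; this layout is an assumption.
    """
    counts = Counter()
    for line in testlist_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and blank lines
        if not line:
            continue
        fields = line.split()
        if len(fields) < 3:
            continue  # not a "kind name status" entry under the assumed layout
        counts[fields[2]] += 1
    return counts


# Hypothetical excerpt; only basic-pluto-01 is a test name mentioned on this page.
SAMPLE = """\
# kind         name                 status
kvmplutotest   basic-pluto-01       good
kvmplutotest   example-wip-test     wip
kvmplutotest   example-manual-test  skiptest
"""

if __name__ == "__main__":
    for status, count in sorted(count_by_status(SAMPLE).items()):
        print(status, count)
```

To apply it to a real checkout, pass the contents of testing/pluto/TESTLIST instead of `SAMPLE`, after checking that the file actually follows the assumed layout.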

To run tests locally, read on.

Running tests

The libreswan tests, in testing/pluto, can be run using several different mechanisms:

| Framework | Speed | Host OS | Guest OS | initsystem testing (systemd, rc.d, ...) | Post-mortem | Interop testing | Notes |
|---|---|---|---|---|---|---|---|
| KVM | slower | Fedora, Debian (BSD anyone?) | Alpine, Fedora, FreeBSD, NetBSD, OpenBSD, Debian | yes | shutdown, core, leaks, refcnt, selinux | strongswan (Linux, FreeBSD); iked (OpenBSD); racoon (NetBSD); racoon2 (NetBSD) | gold standard; ideal for building on obscure platforms; ideal for testing custom kernels; used by the Testing machine; requires 9p (virtio anyone?) |
| Namespaces | fast | linux | uses host's libreswan, kernel, and utilities | no | core, leaks | strongswan (linux)? | ideal for quick tests; requires libreswan to be built/installed on the host; requires all dependencies to be installed on the host; test results sensitive to differing kernel and utilities |
| Docker | | linux | uses host's kernel; uses distro's utilities | ? | ? | ? | ideal for cross-linux builds (CentOS 6, 7, 8, Fedora 28 - rawhide, Debian, Ubuntu); sensitive to differing kernel and utilities |

How tests work

All the test cases involving VMs are located in the libreswan directory under testing/pluto/. The most basic test case is called basic-pluto-01. Each test case consists of a few files:

- description.txt to explain what this test case actually tests

- ipsec.conf files, named after the host (for host west the file is called west.conf). These can also include configuration files for strongswan or racoon2 for interop testing

- ipsec.secrets files, if non-default configurations are used. These also use the host naming, e.g. west.secrets, east.secrets.

- An init.sh file for each VM that needs to start (eg westinit.sh, eastinit.sh, etc)

- One run.sh file for the host that is the initiator (eg westrun.sh)

- Known good (sanitized) output for each VM (eg west.console.txt, east.console.txt)

- testparams.sh if there are any non-default test parameters
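The naming pattern in the list above can be sketched as a small helper that lists the files a test directory is expected to contain and reports which ones are missing. The function names `expected_files` and `missing_files` are hypothetical, and the optional files (secrets files, testparams.sh) are deliberately left out because the list only requires them in non-default cases.

```python
from pathlib import Path


def expected_files(hosts, initiator):
    """Return the per-test file names implied by the naming pattern above."""
    names = ["description.txt"]
    for host in hosts:
        names += [
            f"{host}.conf",         # ipsec.conf for this host
            f"{host}init.sh",       # init script for this VM
            f"{host}.console.txt",  # known good (sanitized) output
        ]
    names.append(f"{initiator}run.sh")  # run script for the initiator
    return names


def missing_files(test_dir, hosts, initiator):
    """List expected files that are not present in test_dir."""
    test_dir = Path(test_dir)
    return [
        name
        for name in expected_files(hosts, initiator)
        if not (test_dir / name).is_file()
    ]


if __name__ == "__main__":
    # Path is relative to a libreswan checkout; adjust as needed.
    print(missing_files("testing/pluto/basic-pluto-01", ["west", "east"], "west"))
```

Treat the result as a hint only: a test may legitimately involve other hosts or omit some of these files.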

Once the test run has completed, you will see an OUTPUT/ directory in the test case directory:

    $ ls OUTPUT/
    east.console.diff  east.console.verbose.txt  RESULT       west.console.txt   west.pluto.log
    east.console.txt   east.pluto.log            swan12.pcap  west.console.diff  west.console.verbose.txt

- RESULT is a text file (whose format is sure to change in the next few months) stating whether the test succeeded or failed.

- The diff files show the differences between this test run and the last known good output.

- Each VM's serial (sanitized) console log (eg west.console.txt)

- Each VM's unsanitized verbose console output (eg west.console.verbose.txt)

- A network capture from the bridge device (eg swan12.pcap)

- Each VM's pluto log, created with plutodebug=all (eg west.pluto.log)

- Any core dumps generated if a pluto daemon crashed
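A quick way to see which hosts deviated from their known good output is to look for non-empty diff files. The sketch below assumes that an empty *.console.diff file means the console output matched; that interpretation and the function name `hosts_with_differences` are assumptions, and the RESULT file remains the authoritative verdict.

```python
from pathlib import Path

DIFF_SUFFIX = ".console.diff"


def hosts_with_differences(output_dir):
    """Return the hosts whose console diff in output_dir is non-empty."""
    output_dir = Path(output_dir)
    return sorted(
        path.name[: -len(DIFF_SUFFIX)]  # "west.console.diff" -> "west"
        for path in output_dir.glob("*" + DIFF_SUFFIX)
        if path.stat().st_size > 0      # assumed: empty diff means no change
    )


if __name__ == "__main__":
    # Path is relative to a libreswan checkout; adjust as needed.
    print(hosts_with_differences("testing/pluto/basic-pluto-01/OUTPUT"))
```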

- testing/baseconfigs/: configuration files installed on guest machines

- testing/guestbin/: shell scripts used by tests, and run on the guest

- testing/linux-system-roles.vpn/: ???

- testing/packaging/: ???

- testing/pluto/TESTLIST: list of tests, and their expected outcome

- testing/pluto/*/: individual test directories

- testing/programs/: executables used by tests, and run on the guest

- testing/sanitizers/: filters for cleaning up the test output

- testing/utils/: test drivers and other host tools

- testing/x509/: certificates; the scripts are run on a guest

Network Diagram

- interface-0 (eth0, vio0, vioif0) is connected to SWANDEFAULT, which has a NAT gateway to the internet

  - the exceptions are the Linux test domains: EAST, WEST, ROAD, NORTH; should they?

  - the BSD domains always bring up interface-0 so that /pool, /source, and /testing can be NFS mounted

  - NIC needs to run DHCP on eth0 manually; how?

  - transmogrify does not try to modify interface-0 (SWANDEFAULT); doing so would break established network sessions such as NFS

- the interface names do not have a consistent order (see the comment above about Fedora's interface-0 not pointing at SWANDEFAULT)

  - Fedora has ethN

  - OpenBSD has vioN (different order)

  - NetBSD has vioifN (different order)
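The per-OS naming above can be written down as a small lookup. This is only a sketch of the naming convention: the mapping keys and the function name `interface_name` are hypothetical, and because the list notes that the ordering differs between guests, the same index does not necessarily attach to the same test network on every OS.

```python
# Interface name prefixes per guest OS, as listed above.
INTERFACE_PREFIX = {
    "fedora": "eth",
    "openbsd": "vio",
    "netbsd": "vioif",
}


def interface_name(guest_os, index):
    """Return the name of interface number `index` on the given guest OS.

    Only the naming is modelled here; which network a given index is
    attached to differs between guests and is not captured.
    """
    return f"{INTERFACE_PREFIX[guest_os.lower()]}{index}"


if __name__ == "__main__":
    for guest in ("Fedora", "OpenBSD", "NetBSD"):
        print(guest, interface_name(guest, 0))
```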

    LEFT                                                              RIGHT
    192.0.3.0/24 -------------------------------------+-- 2001:db8:0:3::/64 (198.18.33)
                                                      |
                                              2001:db8:0:3::254
                                              192.0.3.254(eth0)
                    ROAD                            NORTH
              192.1.3.209(eth0)               192.1.3.33(eth1)
              2001:db8:1:3::209               2001:db8:1:3::33
                      |                               |
    192.1.3.0/24 -----+----------------+--------------+-- 2001:db8:1:3::/64 (198.18.3
                                       |
                               2001:db8:1:3::254
                               192.1.3.254(eth2)
                                      NIC---(swan0)198.19/24
                               192.1.2.254(eth1)
                               2001:db8:1:2::254
                                       |
    192.1.2.0/24 -----------------+----+----------------------+----- 2001:db8:1:2::/64 (198.18.2)
                                  |                           |
                                  |                   2001:db8:1:2::23
                                  |                      192.1.2.23
                                  |                        (eth1)
                                  | 198.18.23.23/24(ipsec)--EAST--198.19/24(swan0?)
                                  |                       (eth0/2?)
                                  |                      192.0.2.254
                                  |                   2001:db8:0:2::254
                                  |                           |
                                  |                           |
                          2001:db8:1:2::45                    |
                             192.1.2.45                       |
                               (eth1)                         |
        192.18.45.45/24(ipsec)--WEST--(swan0?)198.19/23       |
                              (eth0/2?)                       |
                             192.0.1.254                      |
                          2001:db8:0:1::254                   |
                                  |                           |
                                  |       TEST-NET-1
                                  |       192.0.2.0/24 -------+---+-- 2001:db8:0:2::/64 (198.18.23)
                                  |                               |
                                  |                      2001:db8:0:2::12/64
                                  |                         192.0.2.12/24
                                  |                            (eth1)
                                  |     198.18.12.12/24(ipsec)--RISE--(swan0)198.19/16
                                  |                            (eth2)
                                  |                       2001:db8:1::12/64
                                  |                        198.18.1.12/24
                                  |                               |
    192.0.1.0/24 -----------------+-+---- 2001:db8:0:1::/64       | (198.18.45)
                                    |                             |
                           2001:db8:0:1::15/64                    |
                              192.0.1.15/24                       |
                                 (eth1)                           |
           192.18.15.15/24(ipsec)--SET--(swan0)198.19/16          |
                                 (eth2)                           |
                            2001:db8:1::15/64                     |
                             198.18.1.15/24                       |
                                    |                             |
    198.18.1.0/24 ------------------+-----------------------------+----- 2001:db8:1::/64

Problems with the existing Network

The current network has a number of limitations. This section identifies those problems and proposes changes to address them:

- the gateway had only 128 DHCP addresses

- public networks are being used: 192.0.1.0/24 is owned by elevatedcomputing.com; 192.1.2.0/24 is owned by raytheon.com; 192.1.3.0/24 is owned by raytheon.com

- 192.168.234.0/24 (gateway) is reserved for private-use networks and is known to clash with Toronto airport

- using public networks means that the addresses can't be used in documentation

- can't test two hosts where each is behind a gateway VPN

See: https://www.rfc-editor.org/rfc/rfc5737, https://www.rfc-editor.org/rfc/rfc3849, https://www.rfc-editor.org/rfc/rfc6890

The suggestion is:

- use the benchmarking network 198.18.0.0/15

- reserve 198.19/16 for the gateway

- revive [sun]RISE (behind EAST) and [sun]SET (behind WEST)

- reserve a number N for each machine / network: EAST: 23; WEST: 45; RISE: 123; SET: 145; NORTH: 33; NIC: 254

- use 198.18.2N.N/24 for IPsec Interfaces

- use 192.18.1N.1N/24 for RISE and SET
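The difference between the current and the suggested address ranges can be checked with Python's standard ipaddress module. The sketch below classifies a network against a few special-purpose IPv4 ranges: the RFC 5737 documentation networks, the 198.18.0.0/15 benchmarking range, and the 192.168.0.0/16 private-use range. The table is deliberately partial, and the name `classify` is hypothetical.

```python
import ipaddress

# A partial table of special-purpose IPv4 ranges relevant to this section.
SPECIAL_RANGES = {
    "RFC 5737 documentation (TEST-NET-1)": "192.0.2.0/24",
    "RFC 5737 documentation (TEST-NET-2)": "198.51.100.0/24",
    "RFC 5737 documentation (TEST-NET-3)": "203.0.113.0/24",
    "RFC 2544 benchmarking": "198.18.0.0/15",
    "RFC 1918 private use": "192.168.0.0/16",
}


def classify(cidr):
    """Return the special-purpose range containing cidr, if any."""
    net = ipaddress.ip_network(cidr)
    for label, special in SPECIAL_RANGES.items():
        if net.subnet_of(ipaddress.ip_network(special)):
            return label
    return "public (not reserved)"


if __name__ == "__main__":
    for cidr in ("192.0.1.0/24", "192.1.2.0/24", "192.1.3.0/24",
                 "192.0.2.0/24", "198.18.2.0/24", "198.19.0.0/16"):
        print(cidr, "->", classify(cidr))
```

Under this table the networks currently in use (192.0.1.0/24, 192.1.2.0/24, 192.1.3.0/24) come out as public, while everything under 198.18.0.0/15, including 198.19/16, falls inside the benchmarking range.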

    LEFT                                                              RIGHT
    198.18.254.0/24 ----------------------------------+-------------- 2001:db8:254::/64
                                                      |
                                              2001:db8:254::254
                                               198.18.254.254
                                                   (eth0)
                         ROAD                       NORTH
                     198.18.3.209                198.18.3.33
                        (eth0)                     (eth1)
                    2001:db8:3::209            2001:db8:3::33
                           |                          |
    198.18.3.0/24 ---------+----------------+---------+---------------- 2001:db8:3::/64
                                            |
                                     2001:db8:3::254
                                      198.18.3.254
                                         (eth2)
                                           NIC---(swan0)198.19/16
                                         (eth1)
                                      198.18.2.254
                                     2001:db8:2::254
                                            |
    198.18.2.0/24 ----------------+---------+-----------------+-------- 2001:db8:2::/64
                                  |                           |
                                  |                    2001:db8:2::23
                                  |                      198.18.2.23
                                  |                        (eth1)
                                  | 198.18.23.23/24(ipsec)--EAST--(swan0?)198.19/24
                                  |                       (eth0/2?)
                                  |                      192.0.2.23
                                  |                   2001:db8:0:2::23
                                  |                           |
                           2001:db8:2::45                     |
                             198.18.2.45                      |
                               (eth1)                         |
        192.18.45.45/24(ipsec)--WEST--(swan0?)198.19/23       |
                              (eth0/2?)                       |
                             192.0.1.45                       |
                          2001:db8:0:1::45                    |
                                  |                           |
                                  |       TEST-NET-1
                                  |       192.0.2.0/24 -------+---+-- 2001:db8:0:2::/64
                                  |                               |
                                  |                      2001:db8:0:2::12/64
                                  |                         192.0.2.12/24
                                  |                            (eth1)
                                  |     198.18.12.12/24(ipsec)--RISE--(swan0)198.19/16
                                  |                            (eth2)
                                  |                       2001:db8:1::12/64
                                  |                        198.18.1.12/24
                                  |                               |
    192.0.1.0/24 -----------------+-+---- 2001:db8:0:1::/64       |
                                    |                             |
                           2001:db8:0:1::15/64                    |
                              192.0.1.15/24                       |
                                 (eth1)                           |
           192.18.15.15/24(ipsec)--SET--(eth0)198.19/16           |
                                 (eth2)                           |
                            2001:db8:1::15/64                     |
                             198.18.1.15/24                       |
                                    |                             |
    198.18.1.0/24 ------------------+-----------------------------+----- 2001:db8:1::/64