Test Suite: Difference between revisions

Paul Wouters (talk | contribs) (Initial test suite documentation) |

Paul Wouters (talk | contribs) mNo edit summary |


Revision as of 04:00, 20 February 2014

Libreswan comes with an extensive test suite, written mostly in Python, that uses KVM virtual machines and virtual networks. It has replaced the old UML test suite. Apart from KVM, the test suite uses libvirtd and qemu. It is strongly recommended to run the test suite natively on the OS (not inside a VM) on a machine whose CPU has virtualization instructions. The Plan 9 filesystem (9p) is used to mount host directories in the guests; NFS is avoided to prevent network lockups when an IPsec test case cripples the guest's networking.

Note: libvirt 0.9.11 and qemu 1.0 or better are required. RHEL does not support a writable 9p filesystem, so the recommended host/guest OS is Fedora 20.

Preparing the host machine

Nothing apart from the system services requires root access. However, the user you run the tests as must be allowed to run various commands as root via sudo. Additionally, libvirt assumes the VMs are running under the qemu uid, but because we want to share files between host and guests using the 9p filesystem, we want the VMs to run under our own uid. The easiest way to accomplish all of this is to add your user (for example the username "build") to the kvm, qemu and wheel groups. These are the changed lines from /etc/group:

wheel:x:10:root,build
kvm:x:36:qemu,root,qemu,build
qemu:x:107:root,qemu,build

And the file /etc/sudoers would have a line:

%wheel ALL=(ALL) NOPASSWD: ALL

You might need to relogin for all group changes to take effect.
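To confirm that the group changes have reached your current login session, a check along the following lines may help. It is a minimal sketch: the helper name `missing_groups` is made up for this example, and the group list is simply the three groups named above.

```shell
#!/bin/sh
# missing_groups HAVE WANT: print each group in WANT that is absent from HAVE.
# Both arguments are space-separated lists of group names.
# (Hypothetical helper, not part of the libreswan tree.)
missing_groups() {
    have=" $1 "
    for g in $2; do
        case "$have" in
            *" $g "*) ;;                  # already a member
            *) printf '%s\n' "$g" ;;      # not in the session's group list
        esac
    done
}

# Compare the groups of the current session with the three the text asks for.
missing=$(missing_groups "$(id -nG)" "wheel kvm qemu")
if [ -n "$missing" ]; then
    echo "not yet a member of:" $missing
else
    echo "group membership looks complete"
fi
```

If a group is still reported after editing the group file, logging out and back in is the usual remedy, since `id -nG` reflects the groups of the running session.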

Now we are ready to install the various components of libvirtd, qemu and kvm and then start the libvirtd service.

sudo yum install virt-manager qemu-system-x86 libvirt-daemon-driver-qemu qemu qemu-kvm \
    libvirt-daemon-qemu qemu-img qemu-user libvirt-python

sudo systemctl enable libvirtd.service

sudo systemctl start libvirtd.service

Note: Because our VMs don't run as qemu, /var/lib/libvirt/qemu needs to be changed using chmod to make it writable for your user.

Various tools are used or convenient to have when running tests:

sudo yum install python-pexpect git tcpdump

The libreswan source tree includes all the components that are used on the host and inside the test VMs. To get the latest source code using git:

git clone https://github.com/libreswan/libreswan
cd libreswan

Creating the VMs

A configuration file called kvmsetup.sh is used to configure a few parameters for the test suite:

cp kvmsetup.sh.sample kvmsetup.sh

This file contains various environment variables used for creating and running the tests. In the sample version, KVMPREFIX= is set to the home directory of the user "build". POOLSPACE= is where all the VM images will be stored. There should be at least 16GB of free disk space in the pool/ directory. You can change OSTYPE= if you prefer Ubuntu guests over Fedora guests. We recommend that the host and guests run the same OS, because it makes tasks such as running gdb on the host against core dumps created in the guests much easier. OSMEDIA= can be changed to point to a local distribution mirror.
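Before running the installer it can be worth checking the 16GB requirement. The sketch below is only an illustration: the default `$HOME/pool` is an assumed location, and `free_kb` and `enough_space` are hypothetical helper names; substitute the POOLSPACE value from your own kvmsetup.sh.

```shell
#!/bin/sh
# Assumed value for illustration only; use the POOLSPACE from your kvmsetup.sh.
POOLSPACE=${POOLSPACE:-$HOME/pool}

# free_kb DIR: print the kilobytes available on the filesystem holding DIR.
free_kb() {
    df -Pk "$1" | awk 'NR == 2 { print $4 }'
}

# enough_space AVAILABLE_KB NEEDED_GB: succeed if AVAILABLE_KB covers NEEDED_GB.
enough_space() {
    [ "$1" -ge $(( $2 * 1024 * 1024 )) ]
}

if [ -d "$POOLSPACE" ]; then
    if enough_space "$(free_kb "$POOLSPACE")" 16; then
        echo "$POOLSPACE: at least 16GB free"
    else
        echo "$POOLSPACE: less than 16GB free"
    fi
else
    echo "$POOLSPACE does not exist yet"
fi
```

`df -P` is used so that the output stays on one line per filesystem, which keeps the awk field positions stable.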

Once the kvmsetup.sh file has been edited, we can create the VMs:

sh testing/libvirt/install.sh

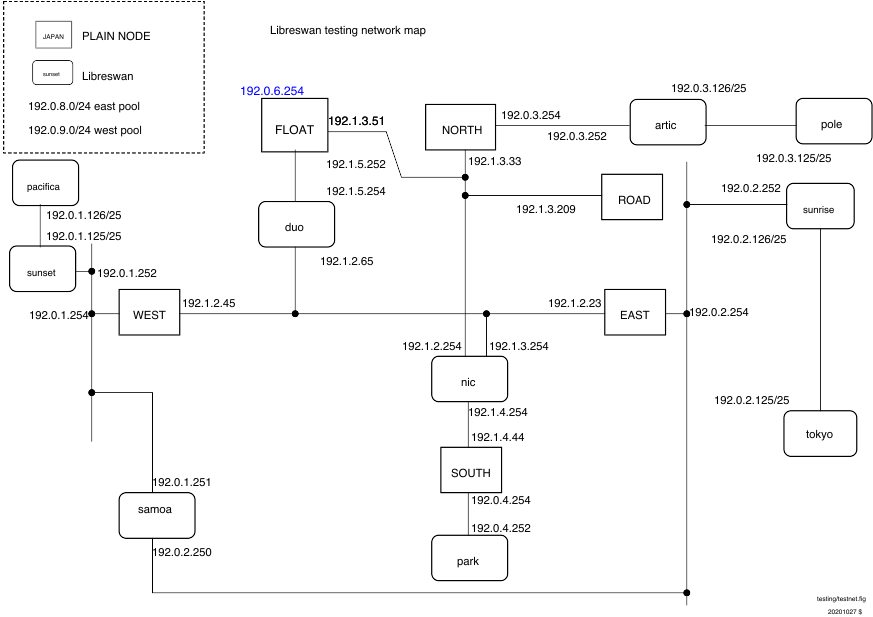

First, a new VM called "fedorabase" (or "ubuntubase") is added to the system. This is an automated minimal install using kickstart. Once installed, the disk image is converted to QCOW and copied for each test VM: west, east, north, road and nic. A few virtual networks are created to hook up the VMs in isolation. These virtual networks have names like "192_1_2_0" and use bridge interface names like "swan12". Finally, the actual VMs are added to the system's libvirt/KVM system and the "fedorabase" VM is deleted.

Since you will be using ssh a lot to login to these machines, it is recommended to put their names in /etc/hosts:

# /etc/hosts entries for libreswan test suite
192.1.2.45 west
192.1.2.23 east
192.0.3.254 north
192.1.3.209 road
192.1.2.254 nic
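A quick way to see which of the five names are still missing from /etc/hosts is sketched below. The helper name `missing_hosts` is invented for this example, and it only looks for the names as hostname fields; it does not verify the addresses.

```shell
#!/bin/sh
# missing_hosts FILE NAME...: print every NAME that has no entry in FILE.
# A name counts as present if it appears as a hostname field (second column
# onwards) on a line that is not a comment.
# (Hypothetical helper, not part of the libreswan tree.)
missing_hosts() {
    file=$1
    shift
    for name in "$@"; do
        if ! awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$file"; then
            printf '%s\n' "$name"
        fi
    done
}

# Prints nothing when all five test VMs are listed.
missing_hosts /etc/hosts west east north road nic
```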

Preparing the VMs

The VMs come pre-installed with everything except libreswan. We do not want to use the OS libreswan package because we want to run our own version to test our code changes. Some of the test cases use the NETKEY/XFRM IPsec stack but most test cases use the KLIPS IPsec stack. Log in to the first VM, then compile and install the libreswan userland and the KLIPS IPsec kernel module:

[build@host:~/libreswan]$ ssh root@west
[...]
[root@west source]# swan-update

swan-update first builds libreswan and then installs libreswan. For the other VMs (except "nic" which never runs IPsec) we only need to install, as libreswan is already built:

for host in east north road; do ssh root@$host swan-install; done

All VMs are now fully provisioned to run test cases.

Note: The directories /source and /testing inside any VM are automatically mounted from the host's libreswan directory. Do not move the libreswan or the pool space directory on the host.

Running a test case

All the test cases involving VMs are located in the libreswan directory under testing/pluto/. The most basic test case is called basic-pluto-01. Each test case consists of a few files:

- description.txt to explain what this test case actually tests

- ipsec.conf files, named after the host (for host west the file is called west.conf). This can also include configuration files for strongswan or racoon2 for interop testing

- ipsec.secrets files, if non-default configurations are used. These also use the host naming, eg west.secrets, east.secrets.

- An init.sh file for each VM that needs to start (eg westinit.sh, eastinit.sh, etc)

- One run.sh file for the host that is the initiator (eg westrun.sh)

- Known good (sanitized) output for each VM (eg west.console.txt, east.console.txt)

- testparams.sh if there are any non-default test parameters
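When writing a new test case, a small check like the one below can flag an incomplete directory. It is a sketch under assumptions: `check_testcase` is a hypothetical helper, and the patterns only cover the files from the list above that every test case is expected to have (configuration, secrets and testparams.sh are optional or vary per test, so they are not checked).

```shell
#!/bin/sh
# check_testcase DIR: report which of the expected kinds of file are missing.
# Returns 0 if at least one file matches every pattern, 1 otherwise.
# (Hypothetical helper, not part of the libreswan tree.)
check_testcase() {
    dir=$1
    status=0
    for pattern in 'description.txt' '*init.sh' '*run.sh' '*.console.txt'; do
        # Expand the pattern inside DIR; an unmatched glob stays literal,
        # so the -e test below fails for it.
        set -- "$dir"/$pattern
        if [ ! -e "$1" ]; then
            echo "missing: $pattern"
            status=1
        fi
    done
    return $status
}

# Check the directory given as the first argument (default: current directory).
if check_testcase "${1:-.}"; then
    echo "layout looks complete"
fi
```

For example, saving this as a script and passing it testing/pluto/basic-pluto-01 from the libreswan directory would check the basic test case.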

You can run this test case by issuing the following command on the host:

cd testing/pluto/basic-pluto-01/
../../utils/dotest.sh

Once the testrun has completed, you will see an OUTPUT/ directory in the test case directory:

$ ls OUTPUT/
east.console.diff  east.console.verbose.txt  RESULT       west.console.txt   west.pluto.log
east.console.txt   east.pluto.log            swan12.pcap  west.console.diff  west.console.verbose.txt

- RESULT is a text file (whose format is sure to change in the next few months) stating whether the test succeeded or failed.

- The diff files show the differences between this testrun and the last known good output.

- Each VM's serial (sanitized) console log (eg west.console.txt)

- Each VM's unsanitized verbose console output (eg west.console.verbose.txt)

- A network capture from the bridge device (eg swan12.pcap)

- Each VM's pluto log, created with plutodebug=all (eg west.pluto.log)

- Any core dumps generated if a pluto daemon crashed
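After a run, the files listed above can be triaged with a few lines of shell. The sketch below assumes that an empty .console.diff file means that VM's console output matched the known good output; that is an assumption of this example, not something the RESULT format guarantees. The helper name `changed_diffs` is hypothetical.

```shell
#!/bin/sh
# changed_diffs DIR: print each *.console.diff file in DIR that is non-empty.
# Assumption: an empty diff file means that VM's console output matched.
# (Hypothetical helper, not part of the libreswan tree.)
changed_diffs() {
    for f in "$1"/*.console.diff; do
        if [ -s "$f" ]; then
            printf '%s\n' "$f"
        fi
    done
}

# Summarize the OUTPUT directory given as the first argument (default: OUTPUT).
out=${1:-OUTPUT}
if [ -d "$out" ]; then
    if [ -f "$out/RESULT" ]; then
        cat "$out/RESULT"
    fi
    changed_diffs "$out"
fi
```

The network capture can be read back on the host with `tcpdump -n -r OUTPUT/swan12.pcap`, which is often the quickest way to see whether any IKE or ESP packets were exchanged at all.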

Diagnosing inside the VM

Once a testrun has completed, the VMs shut down the ipsec subsystem. You can use ssh to log in as root on any host (password "swan") and rerun the test case manually. This gives you a chance to reproduce a crash while using gdb:

ssh root@east
ipsec setup start
pidof pluto
cd /source/OBJ*
gdb programs/pluto/pluto
gdb> attach <pid>
gdb> cont

In another window, you can log in to west and re-trigger the failure. You can either use the root command history (with the arrow keys) to start ipsec and load the right connection, or you can re-run the "init.sh" file:

ssh root@west
cd /testing/pluto/basic-pluto-01
sh ./westinit.sh

You can either continue with running "westrun.sh" or you can look at westrun.sh and issue the commands manually.

Various notes

Currently, only one test can run at a time. You can peek at the guests using virt-manager or you can ssh into the test machines from the host.