Test Suite: Difference between revisions


Revision as of 16:30, 18 October 2018

Libreswan comes with an extensive test suite, written mostly in Python, that uses KVM virtual machines and virtual networks. It has replaced the old UML test suite. Apart from KVM, the test suite uses libvirtd and qemu. It is strongly recommended to run the test suite natively on the OS (not inside a VM) on a machine whose CPU has virtualization instructions. The Plan 9 filesystem (9p) is used to mount host directories in the guests; NFS is avoided to prevent network lockups when an IPsec test case cripples the guest's networking.

libvirt 0.9.11 and qemu 1.0 or better are required. RHEL does not support a writable 9p filesystem, so the recommended host/guest OS is Fedora 22.

Test Frameworks

This page describes the make kvm framework.

Instead of using virtual machines, it is possible to use Docker instances.

More information can be found in Test Suite - Docker in this wiki.

Preparing the host machine

In the following it is assumed that your account is called "build".

Add Yourself to sudo

The test scripts rely on being able to use sudo without a password to gain root access. This is done by creating a no-password rule in /etc/sudoers.d/.

XXX: Surely qemu can be driven without root?

To set this up, add your account to the wheel group and permit wheel to have no-password access. Issue the following commands as root:

echo '%wheel ALL=(ALL) NOPASSWD: ALL' > /etc/sudoers.d/swantest
chmod 0440 /etc/sudoers.d/swantest
chown root:root /etc/sudoers.d/swantest
usermod -a -G wheel build

Disable SELinux

SELinux blocks some actions that we need. We have not created any SELinux rules to avoid this.

Either set it to permissive:

sudo sed --in-place=.ORIG -e 's/^SELINUX=.*/SELINUX=permissive/' /etc/selinux/config
sudo setenforce Permissive

Or disabled:

sudo sed --in-place=.ORIG -e 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config
sudo reboot

Install Required Dependencies

Now we are ready to install the various components of libvirtd, qemu and kvm and then start the libvirtd service.

Even virt-manager isn't strictly required.

On Fedora 28:

# is virt-manager really needed?
sudo dnf install -y qemu virt-manager virt-install libvirt-daemon-kvm libvirt-daemon-qemu
sudo dnf install -y python3-pexpect

Once all is installed start libvirtd:

sudo systemctl enable libvirtd
sudo systemctl start libvirtd

On Debian?

Install Utilities (Optional)

Various tools are used or convenient to have when running tests:

Optional packages to install on Fedora

sudo dnf -y install git patch tcpdump expect python-setproctitle python-ujson pyOpenSSL python3-pyOpenSSL
sudo dnf install -y python2-pexpect python3-setproctitle diffstat

Optional packages to install on Ubuntu

apt-get install python-pexpect git tcpdump expect python-setproctitle python-ujson \
    python3-pexpect python3-setproctitle

Do not install strongswan-libipsec because you won't be able to run non-NAT strongswan tests!

Setting Users and Groups

You need to add yourself to the qemu group. For instance:

sudo usermod -a -G qemu $(id -u -n)

You will need to re-login for this to take effect.

The path to your build needs to be accessible (executable) by root:

chmod a+x ~

Fix /var/lib/libvirt/qemu

Because our VMs don't run as qemu, /var/lib/libvirt/qemu needs to be changed using chmod g+w to make it writable for the qemu group. This needs to be repeated if the libvirtd package is updated on the system.

sudo chmod g+w /var/lib/libvirt/qemu

Create /etc/modules-load.d/virtio.conf

Several virtio modules need to be loaded into the host's kernel. This could be done by modprobe ahead of running any virtual machines but it is easier to install them whenever the host boots. This is arranged by listing the modules in a file within /etc/modules-load.d. The host must be rebooted for this to take effect.

sudo dd <<EOF of=/etc/modules-load.d/virtio.conf
virtio_blk
virtio-rng
virtio_console
virtio_net
virtio_scsi
virtio
virtio_balloon
virtio_input
virtio_pci
virtio_ring
9pnet_virtio
EOF
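After the reboot it can be useful to confirm that the listed modules were actually loaded. The following is a minimal sketch and not part of the libreswan tree: the helper name `missing_modules` is hypothetical, and it assumes the usual /proc/modules layout in which the module name is the first field of each line. Modules compiled into the kernel do not appear in /proc/modules, so a "missing" entry is only a hint.

```python
# Hypothetical helper: report which virtio modules are absent from /proc/modules.

REQUIRED = [
    "virtio_blk", "virtio-rng", "virtio_console", "virtio_net", "virtio_scsi",
    "virtio", "virtio_balloon", "virtio_input", "virtio_pci", "virtio_ring",
    "9pnet_virtio",
]


def missing_modules(required, proc_modules_text):
    """Return the required modules that are not listed in proc_modules_text."""
    # The kernel reports module names with underscores, so normalize dashes.
    loaded = {
        line.split()[0].replace("-", "_")
        for line in proc_modules_text.splitlines()
        if line.strip()
    }
    return [m for m in required if m.replace("-", "_") not in loaded]


# Sample text in the /proc/modules layout; on a real host, pass
# open("/proc/modules").read() instead.
sample = "virtio_net 57344 0 - Live 0x0\nvirtio_rng 16384 0 - Live 0x0\n"
print(missing_modules(["virtio_net", "virtio-rng", "virtio_blk"], sample))
```

On the sample text only virtio_blk is reported, because virtio-rng is matched against the kernel's virtio_rng spelling.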

Ensure that the host has enough entropy

With KVM, a guest system uses entropy from the host through the kernel module "virtio_rng" in the guest's kernel (set above). This has advantages:

- entropy only needs to be gathered on one machine (the host) rather than all machines (the host and the guests)

- the host is in the Real World and thus has more sources of real entropy

- any hacking to make entropy available need only be done on one machine

To ensure the host has enough randomness, run either jitterentropy-rngd or haveged.

Fedora commands for using jitterentropy-rngd (broken on F26, service file specifies /usr/local for path):

sudo dnf install jitterentropy-rngd
sudo systemctl enable jitterentropy-rngd
sudo systemctl start jitterentropy-rngd

Fedora commands for using haveged:

sudo dnf install haveged
sudo systemctl enable haveged
sudo systemctl start haveged
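To see whether the host's entropy estimate looks healthy, the kernel's counter can be read from /proc/sys/kernel/random/entropy_avail. The sketch below is illustrative only: the function names and the threshold of 1000 are assumptions chosen for the example, and kernels differ in how they report this value, so treat the result as a rough indicator.

```python
# Hypothetical check of the host's entropy estimate.

ENTROPY_PATH = "/proc/sys/kernel/random/entropy_avail"
LOW_WATER_MARK = 1000  # arbitrary threshold chosen for illustration


def entropy_is_low(entropy_text, threshold=LOW_WATER_MARK):
    """Return True when the reported entropy estimate is below threshold."""
    return int(entropy_text.strip()) < threshold


def read_entropy(path=ENTROPY_PATH):
    """Read the kernel's current entropy estimate as text (Linux only)."""
    with open(path) as f:
        return f.read()


# Sample readings; on a real host use entropy_is_low(read_entropy()).
print(entropy_is_low("256\n"))
print(entropy_is_low("3500\n"))
```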

Fetch Libreswan

The libreswan source tree includes all the components that are used on the host and inside the test VMs. To get the latest source code using git:

git clone https://github.com/libreswan/libreswan
cd libreswan

Create the Pool directory - KVM_POOLDIR

The pool directory is used to store KVM disk images and other configuration files. By default $(top_srcdir)/../pool is used (that is, adjacent to your source tree).

To change the location of the pool directory, set the KVM_POOLDIR make variable in Makefile.inc.local. For instance:

$ grep KVM_POOLDIR Makefile.inc.local
KVM_POOLDIR=/home/libreswan/pool
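The lookup rule described above (use KVM_POOLDIR when it is set, otherwise fall back to the directory adjacent to the source tree) can be sketched as follows. This is an illustration, not how the makefiles implement it: the function name `pool_dir` is hypothetical, and the parser only understands a plain `KVM_POOLDIR=value` line.

```python
import posixpath
import re


def pool_dir(top_srcdir, makefile_local_text=""):
    """Return KVM_POOLDIR if set in the given text, else <top_srcdir>/../pool."""
    # Only a simple 'KVM_POOLDIR=value' (or ':=' / '?=') assignment is handled.
    match = re.search(
        r"^\s*KVM_POOLDIR\s*[:?]?=\s*(\S+)", makefile_local_text, re.MULTILINE
    )
    if match:
        return match.group(1)
    return posixpath.normpath(posixpath.join(top_srcdir, "..", "pool"))


# The source tree path here is a made-up example.
print(pool_dir("/home/build/libreswan"))
print(pool_dir("/home/build/libreswan", "KVM_POOLDIR=/home/libreswan/pool\n"))
```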

Serve test results as HTML pages on the test server (optional)

If you want to be able to see the results of testruns in HTML, you can enable a webserver:

dnf install httpd
systemctl enable httpd
systemctl start httpd
mkdir /var/www/html/results/
chown build /var/www/html/results/
chmod 755 /var/www/html/results/
cd ~
ln -s /var/www/html/results

If you want it to be the main page of the website, you can create the file /var/www/html/index.html containing:

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.0 Transitional//EN">
<html>
<head>
<meta http-equiv="REFRESH" content="0;url=/results/">
</head>
<body>
</body>
</html>

and then add:

WEB_SUMMARYDIR=/var/www/html/results

to Makefile.inc.local.

Set up KVM and run the Testsuite (for the impatient)

If you're impatient, and want to just run the testsuite using kvm then:

- install (or update) libreswan (if needed this will create the test domains):

- make kvm-install

- run the testsuite:

- make kvm-test

- list the kvm make targets:

- make kvm-help

After that, the following make targets are useful:

- clean the kvm build tree

- make kvm-clean

- clean the kvm build tree and all kvm domains

- make kvm-purge

Running the testsuite

Generating Certificates

The full testsuite requires a number of certificates. The virtual domains are configured for this purpose. Just use:

make kvm-keys

( Before pyOpenSSL version 0.15 you couldn't run dist_certs.py without a patch to support creating SHA1 CRLs. A patch for this can be found at https://github.com/pyca/pyopenssl/pull/161 )

Run the testsuite

To run all test cases (which includes compiling and installing libreswan on all VMs, and the non-VM based test cases), run:

make kvm-install kvm-test

Stopping pluto tests (gracefully)

If you used "make kvm-test", type control-C; possibly repeatedly.

Shell and Console Access (Logging In)

There are several different ways to gain shell access to the domains.

Each method, depending on the situation, has both advantages and disadvantages. For instance:

- while make kvmsh-HOST provides quick access to the console, it doesn't support file copy

- while SSH takes more effort to set up, it supports things like proper terminal configuration and file copy

Serial Console access using "make kvmsh-HOST" (kvmsh.py)

"kvmsh" is a wrapper around "virsh". It automatically handles things like booting the machine, logging in, and correctly configuring the terminal:

$ ./testing/utils/kvmsh.py east
[...]
Escape character is ^]
[root@east ~]# printenv TERM
xterm
[root@east ~]# stty -a
...; rows 52; columns 185; ...
[root@east ~]#

"kvmsh.py" can also be used to script remote commands (for instance, it is used to run "make" on the build domain):

$ ./testing/utils/kvmsh.py east ls
[root@east ~]# ls
anaconda-ks.cfg

Finally, "make kvmsh-HOST" provides a shortcut for the above; and if you're using multiple build trees (see further down), it will connect to the DOMAIN that corresponds to HOST. For instance, notice how the domain "a.east" is passed to kvmsh.py in the example below:

$ make kvmsh-east
/home/libreswan/pools/testing/utils/kvmsh.py --output ++compile-log.txt --chdir . a.east
Escape character is ^]
[root@east source]#

Limitations:

- no file transfer but files can be accessed via /testing

Graphical Console access using virt-manager

"virt-manager", a GNOME tool, can be used to access individual domains.

While easy to use, it doesn't support cut/paste or mechanisms for copying files.

Shell access using SSH

While requiring slightly more effort to set up, it provides full shell access to the domains.

Since you will be using ssh a lot to login to these machines, it is recommended to either put their names in /etc/hosts:

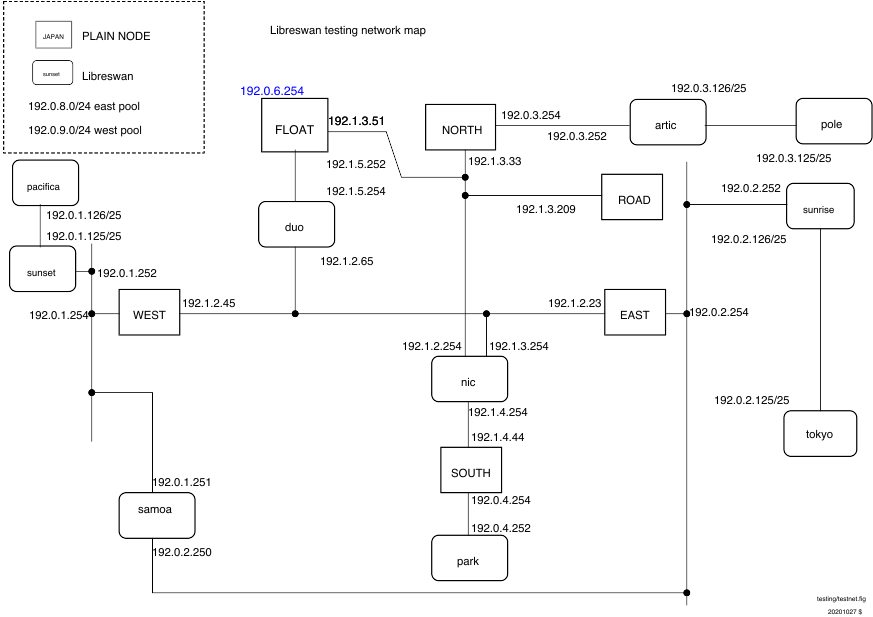

# /etc/hosts entries for libreswan test suite
192.1.2.45 west
192.1.2.23 east
192.0.3.254 north
192.1.3.209 road
192.1.2.254 nic

or add entries to .ssh/config such as:

Host west

Hostname 192.1.2.45
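Either form can be generated from a single table of names and addresses, which avoids typing the entries twice. The sketch below is only an illustration: the helper names are hypothetical, the addresses are copied from the /etc/hosts entries above, and the `User root` line is an addition (a standard ssh_config keyword) that the original .ssh/config example does not include.

```python
# Addresses copied from the /etc/hosts entries for the test suite.
TEST_HOSTS = {
    "west": "192.1.2.45",
    "east": "192.1.2.23",
    "north": "192.0.3.254",
    "road": "192.1.3.209",
    "nic": "192.1.2.254",
}


def hosts_lines(hosts):
    """Return one '<address> <name>' line per host, as used in /etc/hosts."""
    return ["{} {}".format(ip, name) for name, ip in hosts.items()]


def ssh_config_stanzas(hosts, user="root"):
    """Return .ssh/config text with one Host stanza per test machine."""
    stanzas = []
    for name, ip in hosts.items():
        stanzas.append(
            "Host {}\n    Hostname {}\n    User {}\n".format(name, ip, user)
        )
    return "\n".join(stanzas)


print("\n".join(hosts_lines(TEST_HOSTS)))
print(ssh_config_stanzas(TEST_HOSTS))
```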

If you wish to be able to ssh into all the VMs without using a password, add your ssh public key to testing/baseconfigs/all/etc/ssh/authorized_keys. This file is installed as /root/.ssh/authorized_keys on all VMs.

Using ssh becomes easier if you are running ssh-agent (you probably are) and your public key is known to the virtual machine. This command, run on the host, installs your public key on the root account of the guest machine west. This assumes that west is up (it might not be, but you can put this off until you actually need ssh, at which time the machine would need to be up anyway). Remember that the root password on each guest machine is "swan".

ssh-copy-id root@west

You can use ssh-copy-id for any VM. Unfortunately, the key is forgotten when the VM is restarted.

Run an individual test (or tests)

All the test cases involving VMs are located in the libreswan directory under testing/pluto/ . The most basic test case is called basic-pluto-01. Each test case consists of a few files:

- description.txt to explain what this test case actually tests

- ipsec.conf files - the one for host west is called west.conf. This can also include configuration files for strongswan or racoon2 for interop testing

- ipsec.secrets files - if non-default configurations are used. These also use the host naming, e.g. west.secrets, east.secrets.

- An init.sh file for each VM that needs to start (eg westinit.sh, eastinit.sh, etc)

- One run.sh file for the host that is the initiator (eg westrun.sh)

- Known good (sanitized) output for each VM (eg west.console.txt, east.console.txt)

- testparams.sh if there are any non-default test parameters
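The naming scheme in the list above can be written down as a small function, which is handy when creating a new test case by hand. This is a sketch under assumptions: the function name `expected_files` is hypothetical, and the optional files (secrets, testparams.sh, interop configuration) are deliberately left out.

```python
def expected_files(hosts, initiator):
    """Return the non-optional file names for a test case using these hosts."""
    files = ["description.txt"]
    for host in hosts:
        # Per-host configuration, init script and known-good console output.
        files += [host + ".conf", host + "init.sh", host + ".console.txt"]
    # Only the initiating host has a run script.
    files.append(initiator + "run.sh")
    return files


print(expected_files(["west", "east"], "west"))
```

For a west/east test initiated by west, this lists description.txt, the two .conf files, westinit.sh and eastinit.sh, the two console files, and westrun.sh.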

You can run this test case by issuing the following command on the host:

Either:

make kvm-test KVM_TESTS+=testing/pluto/basic-pluto-01/

or:

./testing/utils/kvmtest.py testing/pluto/basic-pluto-01

Multiple tests can be selected with:

make kvm-test KVM_TESTS+=testing/pluto/basic-pluto-*

or:

./testing/utils/kvmresults.py testing/pluto/basic-pluto-*
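Before starting a long run it can help to preview which test directories a wildcard such as testing/pluto/basic-pluto-* will match. The following sketch uses only the Python standard library; the function name `matching_tests` is hypothetical, and the demonstration builds a throwaway directory rather than reading a real source tree.

```python
import glob
import os
import tempfile


def matching_tests(pattern):
    """Return the directories matching pattern, sorted by name."""
    return sorted(p for p in glob.glob(pattern) if os.path.isdir(p))


# Demonstration on a temporary tree standing in for testing/pluto/.
with tempfile.TemporaryDirectory() as top:
    for name in ("basic-pluto-01", "basic-pluto-02", "ikev2-dpd-01"):
        os.mkdir(os.path.join(top, name))
    found = matching_tests(os.path.join(top, "basic-pluto-*"))
    print([os.path.basename(p) for p in found])
```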

Once the test run has completed, you will see an OUTPUT/ directory in the test case directory:

$ ls OUTPUT/
east.console.diff  east.console.verbose.txt  RESULT       west.console.txt   west.pluto.log
east.console.txt   east.pluto.log            swan12.pcap  west.console.diff  west.console.verbose.txt

- RESULT is a text file (whose format is sure to change in the next few months) stating whether the test succeeded or failed.

- The diff files show the differences between this testrun and the last known good output.

- Each VM's serial (sanitized) console log (eg west.console.txt)

- Each VM's unsanitized verbose console output (eg west.console.verbose.txt)

- A network capture from the bridge device (eg swan12.pcap)

- Each VM's pluto log, created with plutodebug=all (eg west.pluto.log)

- Any core dumps generated if a pluto daemon crashed
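Since the diff files hold the differences from the known good output, a quick way to see which hosts deviated is to look for non-empty *.console.diff files. The sketch below assumes that an empty diff file means the host's output matched; the function name `hosts_with_differences` is hypothetical and the demonstration uses a temporary directory in place of a real OUTPUT/.

```python
import os
import tempfile


def hosts_with_differences(output_dir):
    """Return the hosts whose <host>.console.diff file is non-empty."""
    suffix = ".console.diff"
    hosts = []
    for name in sorted(os.listdir(output_dir)):
        path = os.path.join(output_dir, name)
        if name.endswith(suffix) and os.path.getsize(path) > 0:
            hosts.append(name[: -len(suffix)])
    return hosts


# Demonstration on a throwaway directory standing in for OUTPUT/.
with tempfile.TemporaryDirectory() as output_dir:
    with open(os.path.join(output_dir, "east.console.diff"), "w") as f:
        f.write("")  # empty diff: east matched
    with open(os.path.join(output_dir, "west.console.diff"), "w") as f:
        f.write("-expected line\n+actual line\n")
    print(hosts_with_differences(output_dir))
```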

Debugging inside the VM

Debugging pluto on east

Terminal 1 - east: log into east, start pluto, and attach gdb

make kvmsh-east
east# cd /testing/pluto/basic-pluto-01
east# sh -x ./eastinit.sh
east# gdb /usr/local/libexec/ipsec/pluto $(pidof pluto)
(gdb) c

Terminal 2 - west: log into west, start pluto and the test

make kvmsh-west
west# sh -x ./westinit.sh ; sh -x westrun.sh

If pluto wasn't running, gdb would complain: --p requires an argument

When pluto crashes, gdb will show that and await commands. For example, the bt command will show a backtrace.

Debugging pluto on west

See above, but also use virt as a terminal.

/root/.gdbinit

If you want to get rid of the warning "warning: File "/testing/pluto/ikev2-dpd-01/.gdbinit" auto-loading has been declined by your `auto-load safe-path'"

echo "set auto-load safe-path /" >> /root/.gdbinit

Updating the VMs

- delete all the copies of the base VM:

- $ make kvm-purge

- install again

- $ make kvm-install

The /testing/guestbin directory

The guestbin directory contains scripts that are used within the VMs only.

swan-transmogrify

When the VMs were installed, an XML configuration file from testing/libvirt/vm/ was used to configure each VM with the right disks, mounts and nic cards. Each VM mounts the libreswan directory as /source and the libreswan/testing/ directory as /testing . This makes the /testing/guestbin/ directory available on the VMs. At boot, the VMs run /testing/guestbin/swan-transmogrify. This python script compares the nic of eth0 with the list of known MAC addresses from the XML files. By identifying the MAC, it knows which identity (west, east, etc) it should take on. Files are copied from /testing/baseconfigs/ into the VM's /etc directory and the network service is restarted.
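The identity lookup at the heart of this step amounts to a table keyed by MAC address. The sketch below is not the real swan-transmogrify script: the MAC addresses are made up for illustration (the real ones come from the XML files), and the function name `identity_for` is hypothetical.

```python
# Hypothetical MAC-to-identity table; the real values live in the XML files.
KNOWN_MACS = {
    "52:54:00:aa:00:01": "west",
    "52:54:00:aa:00:02": "east",
}


def identity_for(mac, known=KNOWN_MACS):
    """Return the identity for eth0's MAC address, or None if unknown."""
    # Normalize case and whitespace so values read from sysfs compare equal.
    return known.get(mac.strip().lower())


print(identity_for("52:54:00:AA:00:02\n"))
print(identity_for("00:00:00:00:00:00"))
```

A machine whose MAC is not in the table gets no identity, so the caller can decide whether to leave its configuration untouched.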

swan-build, swan-install, swan-update

These commands are used to build, install or build+install (update) the libreswan userland and kernel code.

swan-prep

This command is run as the first command of each test case to set up the host. It copies the required files from /testing/baseconfigs/ and the specific test case files onto the VM test machine. It does not start libreswan. That is done in the "init.sh" script.

The swan-prep command takes two options. The --x509 option is required to copy in all the required certificates and update the NSS database. The --46 / --6 option is used to give the host IPv4 and/or IPv6 connectivity. By default, hosts only get IPv4 connectivity, as this reduces the noise captured with tcpdump.

fipson and fipsoff

These are used to fake a kernel into FIPS mode, which is required for some of the tests.

Various notes

- Currently, only one test can run at a time.

- You can peek at the guests using virt-manager or you can ssh into the test machines from the host.

- ssh may be slow to prompt for the password. If so, start up the vm "nic"

- On VMs use only one CPU core. Multiple CPUs may cause pexpect to mangle output.

- 2014 Mar: DHR needed to do the following to make things work each time he rebooted the host

$ sudo setenforce Permissive
$ ls -ld /var/lib/libvirt/qemu
drwxr-x---. 6 qemu qemu 4096 Mar 14 01:23 /var/lib/libvirt/qemu
$ sudo chmod g+w /var/lib/libvirt/qemu
$ ( cd testing/libvirt/net ; for i in * ; do sudo virsh net-start $i ; done ; )

- to make the SELinux enforcement change persist across host reboots, edit /etc/selinux/config

- to remove "169.254.0.0/16 dev eth0 scope link metric 1002" from "ipsec status" output

echo 'NOZEROCONF=1' >> /etc/sysconfig/network

Need Strongswan 5.3.2 or later

The baseline Strongswan needed for our interop tests is 5.3.2. This isn't part of Fedora or RHEL/CentOS at this time (2015 September).

Ask Paul for a pointer to the required RPM files.

Strongswan has dependency libtspi.so.1

sudo dnf install trousers
sudo rpm -ev strongswan
sudo rpm -ev strongswan-libipsec
sudo rpm -i strongswan-5.2.0-4.fc20.x86_64.rpm

To update to a newer version, place the rpm in the source tree on the host machine. This avoids needing to connect the guests to the internet. Then start up all the machines, wait until they are booted, and update the Strongswan package on each machine. (DHR doesn't know which machines actually need a Strongswan.)

for vm in west east north road ; do sudo virsh start $vm; done
# wait for booting to finish
for vm in west east north road ; do ssh root@$vm 'rpm -Uv /source/strongswan-5.3.2-1.0.lsw.fc21.x86_64.rpm' ; done

To improve

- install and remove RPM using swantest + make rpm support

- add summarizing script that generate html/json to git repo

- coredump. It has been a mystery :) systemd or some daemon appears to block coredumps on the Fedora 20 systems.

- when running multiple tests from TESTLIST, shut down the hosts before copying the OUTPUT dir. This way we get leak detection information. However, for single test runs do not shut down.

IPv6 tests

IPv6 test cases seem to work better when IPv6 is disabled on the KVM bridge interfaces the VMs use. The bridges are swanXX and their config files are in /etc/libvirt/qemu/networks/, for example /etc/libvirt/qemu/networks/192_0_1.xml. Remove the following line from it, then reboot or restart libvirt.

libvirt/qemu/networks/192_0_1.xml
<ip family="ipv6" address="2001:db8:0:1::253" prefix="64"/>

Afterwards, ifconfig swan01 should show no IPv6 address at all, not even an fe80:: link-local address. The IPv6 test cases should then work.

Please give me feedback if this hack works for you. I shall try to add more information about this.

Sanitizers

- summarize output from tcpdump

- count established IKE, ESP, AH states (there is a count at the end of "ipsec status" that is not accurate; it counts instantiated connections as loaded)

- dpd ping sanitizer. DPD tests have unpredictable packet loss for ping.

Publishing Results on the web: http://testing.libreswan.org/results/

This is experimental and uses:

- CSS

- javascript

Two scripts are available:

- testing/web/setup.sh

- sets up the directory ~/results adding any dependencies

- testing/web/publish.sh

- runs the testsuite and then copies the results to ~/results

To view this, use file:///.

To get this working with httpd (Apache web server):

sudo systemctl enable httpd
sudo systemctl start httpd
sudo ln -s ~/results /var/www/html/
sudo sh -c 'echo "AddType text/plain .diff" >/etc/httpd/conf.d/diff.conf'

To view the results, use http://localhost/results.

Speeding up "make kvm-test" by running things in parallel

Internally kvmrunner.py has two work queues:

- a pool of reboot threads; each thread reboots one domain at a time

- a pool of test threads; each thread runs one test at a time using domains with a unique prefix

The test threads use the reboot thread pool as follows:

- get the next test

- submit required domains to reboot pool

- wait for domains to reboot

- run test

- repeat
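The loop above can be sketched with the Python standard library. This is an illustration of the two-queue idea only, not the code in kvmrunner.py: the function `run_suite` and its `reboot` and `run_test` callbacks are hypothetical stand-ins, and the sketch uses one test thread per domain prefix.

```python
from concurrent.futures import ThreadPoolExecutor
import queue
import threading


def run_suite(tests, prefixes, reboot_workers, reboot, run_test):
    """Run tests with one test thread per prefix and a shared reboot pool."""
    pending = queue.Queue()
    for test in tests:
        pending.put(test)
    results = {}
    lock = threading.Lock()

    with ThreadPoolExecutor(max_workers=reboot_workers) as reboot_pool:

        def test_thread(prefix):
            while True:
                try:
                    name, hosts = pending.get_nowait()  # get the next test
                except queue.Empty:
                    return
                domains = [prefix + host for host in hosts]
                # Submit the required domains to the reboot pool, then wait.
                futures = [reboot_pool.submit(reboot, d) for d in domains]
                for future in futures:
                    future.result()
                outcome = run_test(name, domains)  # run the test
                with lock:
                    results[name] = outcome

        threads = [
            threading.Thread(target=test_thread, args=(p,)) for p in prefixes
        ]
        for t in threads:
            t.start()
        for t in threads:
            t.join()
    return results


tests = [("basic-pluto-01", ["east", "west"]), ("basic-pluto-02", ["east", "west"])]
results = run_suite(
    tests, ["a.", "b."], 2,
    reboot=lambda domain: None,               # stand-in for a real reboot
    run_test=lambda name, domains: domains,   # stand-in for a real test
)
print(sorted(results))
```

Because the reboot pool is shared, the number of simultaneous reboots stays bounded no matter how many test threads are waiting on it.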

By adjusting KVM_WORKERS and KVM_PREFIXES it is possible to:

- speed up test runs

- run independent testsuites in parallel

The reboot thread pool - make KVM_WORKERS=...

Booting the domains is the most CPU intensive part of running a test, and trying to perform too many reboots in parallel will bog down the machine to the point where tests time out and interactive performance becomes hopeless. For this reason a pre-sized pool of reboot threads is used to reboot domains:

- the default is 1 reboot thread limiting things to one domain reboot at a time

- KVM_WORKERS specifies the number of reboot threads, and hence, the reboot parallelism

- increasing this allows more domains to be rebooted in parallel

- however, increasing this consumes more CPU resources

To increase the size of the reboot thread pool set KVM_WORKERS. For instance:

$ grep KVM_WORKERS Makefile.inc.local
KVM_WORKERS=2
$ make kvm-install kvm-test
[...]
runner 0.019: using a pool of 2 worker threads to reboot domains
[...]
runner basic-pluto-01 0.647/0.601: 0 shutdown/reboot jobs ahead of us in the queue
runner basic-pluto-01 0.647/0.601: submitting shutdown jobs for unused domains: road nic north
runner basic-pluto-01 0.653/0.607: submitting boot-and-login jobs for test domains: east west
runner basic-pluto-01 0.654/0.608: submitted 5 jobs; currently 3 jobs pending
[...]
runner basic-pluto-01 28.585/28.539: domains started after 28 seconds

Only if your machine has lots of cores should you consider adjusting this in Makefile.inc.local.

The tests thread pool - make KVM_PREFIXES=...

Note that this is still somewhat experimental and has limitations:

- stopping parallel tests requires multiple control-c's

- since the duplicate domains have the same IP address, things like "ssh east" don't apply; use "make kvmsh-<prefix><domain>" or "sudo virsh console <prefix><domain>" or "./testing/utils/kvmsh.py <prefix><domain>".

Tests spend a lot of their time waiting for timeouts or slow tasks to complete. So that tests can be run in parallel, KVM_PREFIX provides a list of prefixes to add to the host names, forming unique domain groups that can each be used to run tests:

- the default is no prefix limiting things to a single global domain pool

- KVM_PREFIXES specifies the domain prefixes to use, and hence, the test parallelism

- increasing this allows more tests to be run in parallel

- however, increasing this consumes more memory and context switch resources

For instance, setting KVM_PREFIXES in Makefile.inc.local to specify a unique set of domains for this directory:

$ grep KVM_PREFIX Makefile.inc.local
KVM_PREFIX=a.
$ make kvm-install
[...]
$ make kvm-test
[...]
runner 0.018: using the serial test processor and domain prefix 'a.'
[...]
a.runner basic-pluto-01 0.574: submitting boot-and-login jobs for test domains: a.west a.east

And setting KVM_PREFIXES in Makefile.inc.local to specify two prefixes and, consequently, run two tests in parallel:

$ grep KVM_PREFIX Makefile.inc.local
KVM_PREFIX=a. b.
$ make kvm-install
[...]
$ make kvm-test
[...]
runner 0.019: using the parallel test processor and domain prefixes ['a.', 'b.']
[...]
b.runner basic-pluto-02 0.632/0.596: submitting boot-and-login jobs for test domains: b.west b.east
[...]
a.runner basic-pluto-01 0.769/0.731: submitting boot-and-login jobs for test domains: a.west a.east

This creates and uses two dedicated domain/network groups (a.east ..., and b.east ...).
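The way prefixes combine with host names to form domain groups can be sketched in a few lines. This is an illustration only: the function name `domain_groups` is hypothetical, and the host list is assumed from the domain names that appear in the runner logs (east, west, north, road, nic).

```python
# Host names as they appear in the runner output; assumed to be the full set.
HOSTS = ["east", "west", "north", "road", "nic"]


def domain_groups(prefix_setting, hosts=HOSTS):
    """Map each prefix in a 'KVM_PREFIX=a. b.' style value to its domains."""
    prefixes = prefix_setting.split() or [""]  # no prefix: one global group
    return {p: [p + host for host in hosts] for p in prefixes}


groups = domain_groups("a. b.")
print(groups["a."])
print(groups["b."])
```

With an empty setting there is a single group using the plain host names, which matches the default of no prefix.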

Finally, to get rid of all the domains use:

$ make kvm-uninstall

or even:

$ make KVM_PREFIX=b. kvm-uninstall

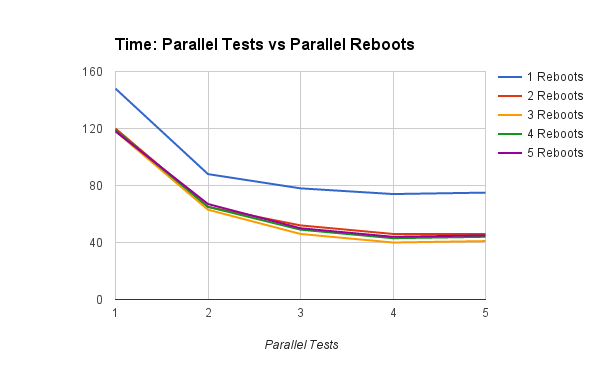

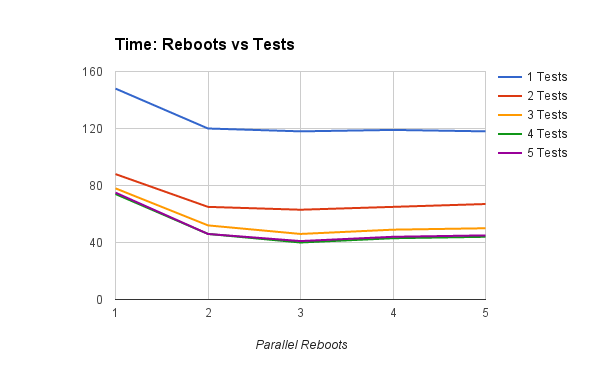

Two domain groups (e.g., KVM_PREFIX=a. b.) seem to give the best results.

Recommendations

Some Analysis

The test system:

- 4-core 64-bit intel

- plenty of ram

- the file mk/perf.sh

Increasing the number of parallel tests, for a given number of reboot threads:

- having #cores/2 reboot threads has the greatest impact

- having more than #cores reboot threads seems to slow things down

Increasing the number of reboots, for a given number of test threads:

- adding a second test thread has a far greater impact than adding a second reboot thread - contrast top lines

- adding a third and even fourth test thread - i.e., up to #cores - still improves things

Finally here's some ASCII art showing what happens to the failure rate when the KVM_PREFIX is set so big that the reboot thread pool is kept 100% busy:

          Fails  Reboots  Time
   ************ 127   1   6:35 ****************************************
 ************** 135   2   3:33 *********************
*************** 151   3   3:12 *******************
*************** 154   4   3:01 ******************

Notice how having more than #cores/2 KVM_WORKERS (here 2) has little benefit and failures edge upwards.
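The same trend can be read off numerically by comparing each row of the chart with the single-reboot-thread row. The sketch below copies the figures from the chart; the function names are hypothetical, and since the chart does not state the unit of the Time column, the code only relies on it being a base-60 "major:minor" value, which is enough for a ratio.

```python
# (reboot threads, failures, elapsed time) copied from the chart.
RUNS = [(1, 127, "6:35"), (2, 135, "3:33"), (3, 151, "3:12"), (4, 154, "3:01")]


def to_units(text):
    """Convert 'major:minor' (base 60) into a count of the minor unit."""
    major, minor = text.split(":")
    return int(major) * 60 + int(minor)


def summarize(runs):
    """Return (threads, speedup vs first row, extra failures vs first row)."""
    base_time = to_units(runs[0][2])
    base_fails = runs[0][1]
    rows = []
    for workers, fails, elapsed in runs:
        speedup = base_time / to_units(elapsed)
        rows.append((workers, round(speedup, 2), fails - base_fails))
    return rows


for row in summarize(RUNS):
    print(row)
```

Going from one to two reboot threads gives a speedup of about 1.85 for 8 extra failures, while going from two to four only raises it to about 2.18 and adds a further 19 failures.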

Desktop Development Directory

- reduce build/install time - use only one prefix

- reduce single-test time - boot domains in parallel

- use the non-prefix domains east et al. so it is easy to access the test domains using tools like ssh

Let's assume 4 cores:

KVM_WORKERS=2
KVM_PREFIX=''

You could also add a second prefix, for example:

KVM_PREFIX= '' a.

but that, unfortunately, slows down the build/install time.

Desktop Baseline Directory

- do not overload the desktop - reduce CPU load by booting sequentially

- reduce total testsuite time - run tests in parallel

- keep separate from the development directory above

Let's assume 4 cores:

- KVM_WORKERS=1

- KVM_PREFIX= b1. b2.

Dedicated Test Server

- minimize total testsuite time

- maximize CPU use

- assume only testsuite running

Assuming 4 cores:

- KVM_WORKERS=2

- KVM_PREFIX= '' t1. t2. t3.